Article category: Company News

Descartes Labs Goes All-in on AWS to Help Organizations...

Descartes Labs migrates to AWS infrastructure to rapidly analyze geospatial data for timely...

Article category: Science & Technology

At Descartes Labs, we’ve been working at scale for years to solve some of the world’s hardest and most important geospatial AI challenges. Our custom solutions have helped global enterprises transform their physical supply chains to become more efficient and profitable.

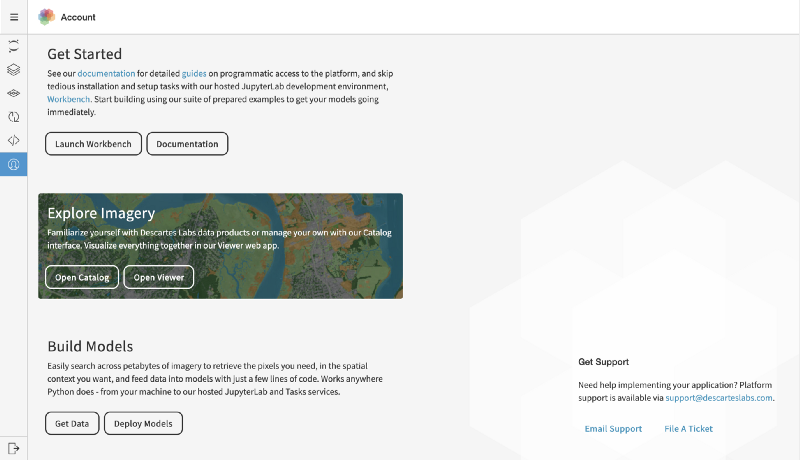

After years of refinement, we’re now making our platform and tools publicly available so that customers can build models to transform businesses more quickly, efficiently, and cost-effectively.

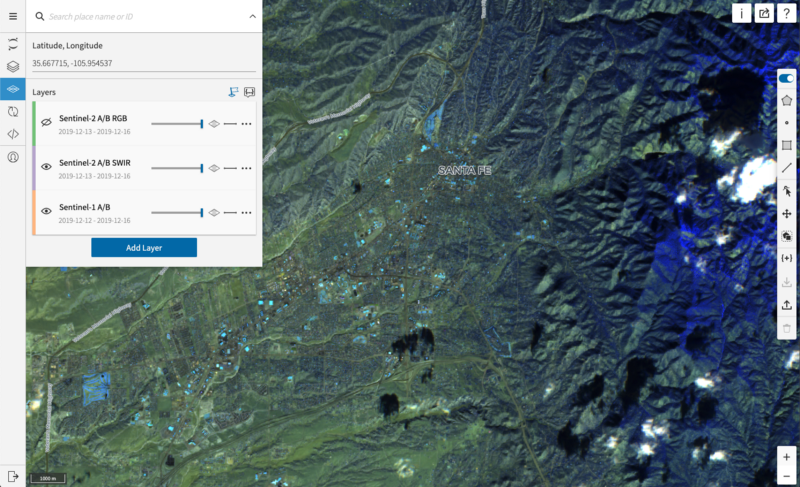

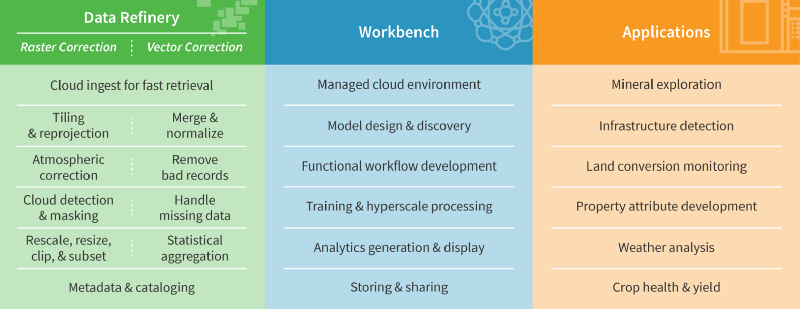

The Descartes Labs Platform is made up of three components that work together to accelerate productivity across IT, engineering, data science, and business leadership.

The Descartes Labs Platform turns AI into a core competency by giving data scientists the best geospatial data and modeling tools in one complete package. We’ve built the scaling infrastructure that lets your data science team design models faster than ever before, using our massive data archive or your own.

“There’s zero pre-processing required with the DL Platform. I can query the API and get a NumPy array processed and ready for analysis in seconds.”—Alice Durieux, Applied Scientist, Forestry and Climate

One can think of geospatial AI as a set of tools and workflows that help predict events and phenomena throughout the physical world. Continuous improvement in physical forecasting and prediction has become a primary goal for producers and consumers across the full spectrum of global trade. This is especially true in 2020, as companies face more rigorous sustainability and efficiency challenges spanning input materials, manufacturing, and downstream product use.

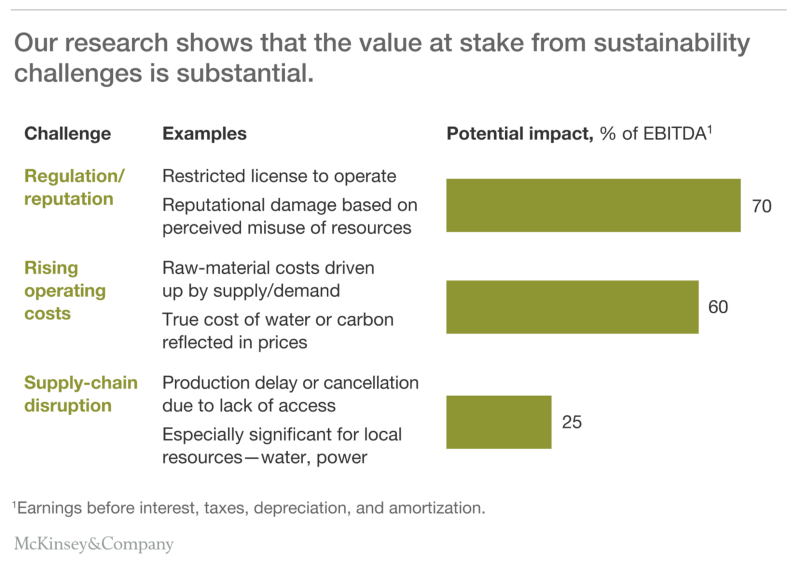

In many cases, sustainability and efficiency are two sides of the same coin. Companies often find it difficult to generate revenue and improve margins without making progress on both fronts. This is evident in the McKinsey research below, which shows that substantial earnings value is at stake from corporate sustainability challenges.

With risks like these, it’s imperative to pay close attention to patterns in the physical world. Natural resources and supply chain management have become more global, more competitive, and increasingly subject to sustainable investing standards. The rise of climate-related financial risk investing is changing incentives for public companies. At the same time, in today’s highly connected global environment, supply and demand shocks are being priced in more rapidly, and volatility is lower.

Adverse weather and changing environmental conditions make the picture even more opaque. They pose risks to physical assets and increase the need for more accurate assessments of insured value. Because the global picture already has so many gaps, these uncertainties are magnified when it comes to the impact on your market, the value of your products, your costs, and the risks to your bottom line.

Most critically, the window to decide and act is dramatically shorter today than it was even a few years ago. The market is learning faster and faster. What tools do you use to discover information before your competitors? How do your users make sense of all the data flowing past? How can you predict how market conditions will evolve over the next hour, day, month, quarter, or year?

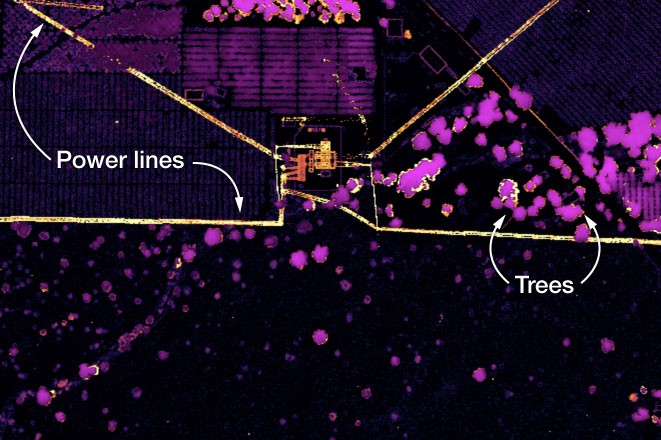

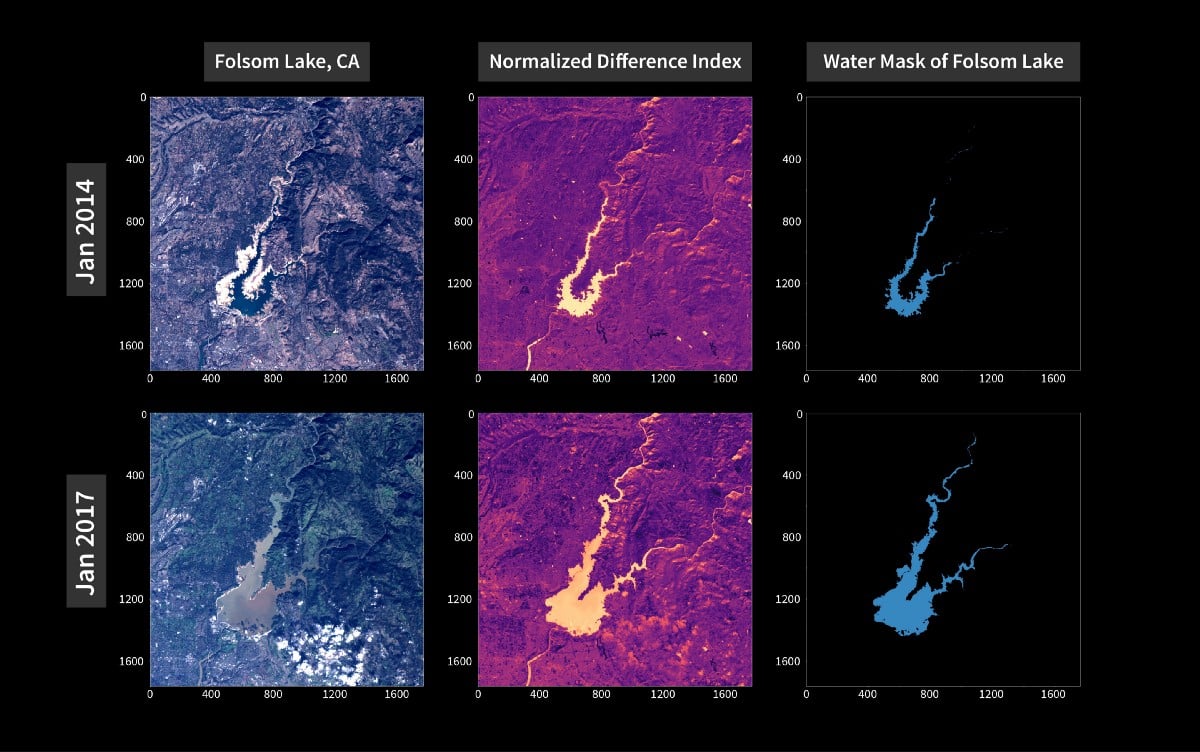

Geospatial AI can help resolve these challenges, but the underlying datasets are massive and highly technical, and they require specialized tools and knowledge. Data science teams universally report that the number one factor that slows them down is data readiness, and nowhere is this truer than with geospatial data.
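To make “data readiness” concrete, here is a minimal sketch of one routine preprocessing step for satellite imagery: masking cloudy pixels across a time series of scenes and taking a per-pixel median to produce a single cloud-free composite. It is a generic NumPy illustration, not the Descartes Labs API or its actual pipeline. It assumes the scenes are already co-registered on a common grid and that the caller supplies a boolean cloud mask; the `cloud_masked_median` helper is hypothetical.

```python
import warnings

import numpy as np


# Hypothetical helper for illustration; not part of any Descartes Labs client library.
def cloud_masked_median(scenes: np.ndarray, cloud_mask: np.ndarray) -> np.ndarray:
    """Build a cloud-free composite from a co-registered time stack of scenes.

    scenes:     array of shape (time, bands, rows, cols), e.g. surface reflectance
    cloud_mask: bool array of shape (time, rows, cols); True marks a cloudy pixel
    Returns an array of shape (bands, rows, cols). A pixel that is cloudy in
    every scene has no valid observation and is returned as NaN.
    """
    if cloud_mask.shape != (scenes.shape[0],) + scenes.shape[2:]:
        raise ValueError("cloud_mask must have shape (time, rows, cols) matching scenes")

    # Broadcast the per-pixel mask across the band axis and blank out cloudy pixels.
    masked = np.where(cloud_mask[:, np.newaxis, :, :], np.nan, scenes.astype(float))

    # Per-pixel median over the time axis, skipping the NaNs introduced above.
    # np.nanmedian emits a RuntimeWarning for all-NaN slices; that case is
    # expected here (fully clouded pixels), so the warning is suppressed.
    with warnings.catch_warnings():
        warnings.simplefilter("ignore", category=RuntimeWarning)
        return np.nanmedian(masked, axis=0)


# Example: three single-band 2x2 scenes; clouds cover the top-left pixel
# in the first two scenes, so only the third scene contributes there.
scenes = np.array([
    [[[0.2, 0.3], [0.4, 0.5]]],
    [[[0.9, 0.3], [0.4, 0.5]]],
    [[[0.1, 0.3], [0.4, 0.6]]],
])
cloud_mask = np.zeros((3, 2, 2), dtype=bool)
cloud_mask[0, 0, 0] = True
cloud_mask[1, 0, 0] = True
composite = cloud_masked_median(scenes, cloud_mask)
print(composite[0].tolist())  # [[0.1, 0.3], [0.4, 0.5]]
```

A median is used rather than a mean because it is less sensitive to haze or shadows that the cloud mask misses. Pixels that are cloudy in every scene come back as NaN instead of being silently filled, so downstream code can decide how to handle them.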

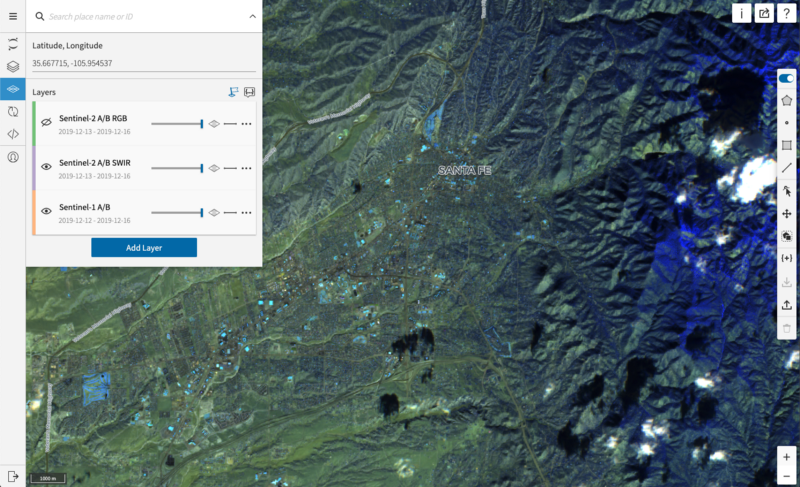

This is why we built the Descartes Labs Platform. It includes a data refinery whose processing and computational scale rival those of major technology giants, combining a diverse catalog of geospatial datasets with your own private data archives. It also offers modeling tools designed around the scientific method, with machine learning workflows purpose-built for large-scale, complex global systems.

Organizations use the Descartes Labs Platform to rapidly and securely scale computer vision, statistical, and machine learning models, transforming business decisions and handling nearly all essential modeling functions with one solution in the cloud.

The platform provides Python access to Descartes Labs’ APIs, including Catalog, Raster, Scenes, Tasks, Workflows, Monitoring, and more. Together, these APIs enable the rapid, collaborative development of global-scale commodity and earth systems analytics.

What if your business units could quickly ask a question about the impact of a weather event, or hypothesize about the relationship between a supply factor and the movement of the market? Or simply understand the total extent of global production?

Or what if your data science team could reference petabytes of earth observation data in milliseconds, or ingest your own datasets that haven’t been properly leveraged in the past? What if you could form a hypothesis with your data science team and get a sense of what’s possible in less than 24 hours?

At Descartes Labs, our goal is to help our customers increase “speed to signal.” And today with the Descartes Labs Platform, we’re giving business and technology teams the power to build near-real-time monitoring and forecasting capabilities, allowing them to model the world that surrounds us all.

Listen to our platform launch webinar or drop us a line to discuss how the Descartes Labs Platform can accelerate productivity across your enterprise.
